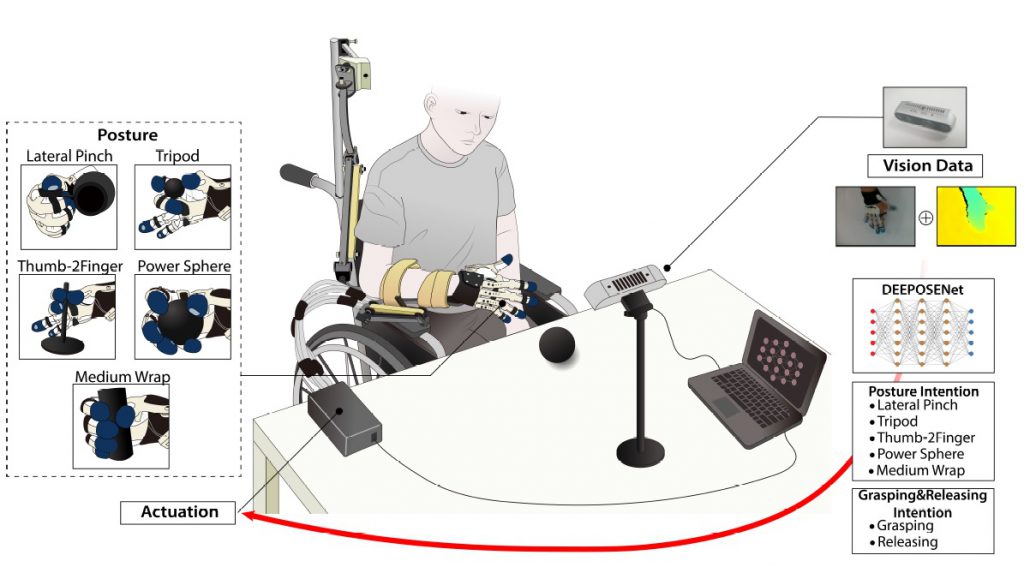

For stroke survivors, diminished hand function limits the ability to perform Activities of Daily Living (ADLs). To support rehabilitation exercises, soft robotic gloves have been used to help stroke survivors actively practice hand movements based on intentions expressed through biosignals, such as electromyogram and electroencephalogram. While practicing multiple hand postures can lead to greater motor function recovery, detecting intentions across multiple hand postures remains challenging and often yields low classification performance in online tests, which can hinder stroke survivors from actively practicing various hand postures for rehabilitation. To address this, we propose the DEpth Enhanced hand POSturE intention Network (DEEPOSE-Net), which predicts users’ intentions regarding multiple hand postures. Our model analyzes a sequence of image and depth data capturing users’ arm behavior and hand-object interactions to predict the desired hand posture. This work is a collaboration with Prof. Hyung Soon Park’s group at KAIST Mechanical Engineering.

Related publications

1. E Rho, H Lee, Y Lee, K Lee, J Mun, M Kim, D Kim, HS Park, S Jo, Multiple Hand Posture Rehabilitation System using Vision-Based Intention Detection and Soft Robotic Glove, IEEE Transactions on Industrial Informatics, 20:4, 6499-6509, April 2024 [LINK] [PDF]